Mixed Methods User Research

Project Summary

Fuller Seminary is a graduate school with a growing body of online students. The seminary wanted to improve the digital experience for these students, but didn’t know what they wanted or where to start.

Our team conducted three separate research efforts to gather feedback from online students. At the end of our project, we delivered 38 actionable recommendations, prioritized by impact and difficulty, for improving the digital student experience.

Key Deliverables

Ecosystem map of the current digital student experience

Survey results (388 participants)

Synthesized feedback from 8 SQUACK critique sessions (40+ participants)

Themes and findings from 12 qualitative interviews

38 actionable recommendations for improving the digital student experience

A matrix ranking each recommendation by effort and impact

My Role

I was the primary contributor for this work, working alongside the project lead. Julie Jensen, the creator of the SQUACK critique method, also worked with us to design and execute the group feedback sessions.

Timeline

November 2022 - May 2023

Nondisclosure Agreement

Examples of this work have been lightly redacted to protect participants’ identifying data.

Our scope for this project included everything from the time a student applies to Fuller through graduation. The only thing that was not included in our scope was coursework.

We began by auditing existing student resources and interviewing staff to get a sense of the digital student landscape. We represented our findings in an ecosystem map.

Introduction

After mapping the digital ecosystem, we began our research design. We proposed a three-phase research effort that would allow us to collect comprehensive feedback from Fuller students:

First, we conducted a survey to get high-level signal about student needs and frustrations from a large sample of students.

Second, we conducted group feedback sessions to get interface-specific feedback on key resources students identified in the survey.

Third, we conducted a set of generative one-on-one interviews with students to get rich, descriptive feedback about the holistic student experience.

Survey Design

We conducted our survey using Qualtrics. Since Fuller supports programs in three languages (English, Spanish, and Korean), we used the seminary’s translation services to offer the survey in all three. The survey was sent to all active Fuller students via their school email accounts and stayed live for one month, giving students ample time to respond.

Our survey’s goal was to provide high-level signal on which aspects of the student experience were important to current students. It included four primary sections:

General information-seeking strategies

Preferred methods of interacting with specific departments or programs, such as the library and academic advising

Holistic satisfaction level with the Fuller student experience

Demographic data

Our survey received 388 responses, representing about 20% of the active student body. Respondents came from 25 countries and 33 US states.

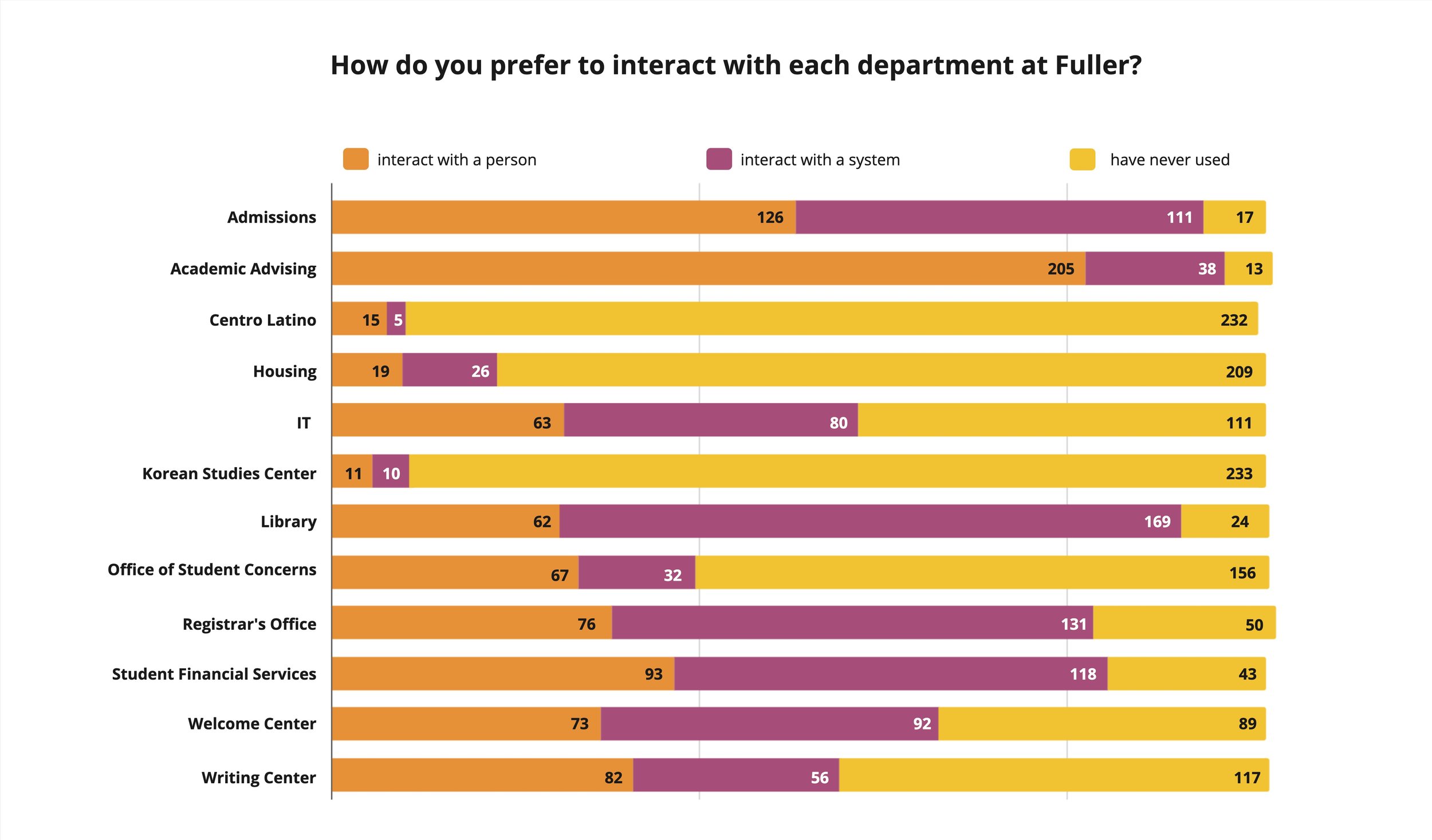

Survey results pointed us toward the systems and resources students found most frustrating, and gave us valuable information about their information-seeking strategies. Two visuals depicting responses to specific survey questions are shown below.

This graph shows how students prefer to interact with specific departments. Data from this question provided signal about which departments students interact with most, as well as feedback about their preferred communication methods.

This graph shows responses to the question, “Would you like your time at Fuller to be primarily focused on building community, or academic coursework?” The responses show that students prefer a focus on academics.

Group Feedback Sessions

After analyzing the survey results, we used the data to design SQUACK feedback sessions. The goal of these sessions was to critique the online systems students use and identify ways to improve the UX, UI, and information architecture of these systems.

For each session, we placed screenshots of key student resources in a Miro board. During the session, students placed feedback on the interfaces using sticky notes. Examples of featured interfaces included the class registration process, applying for scholarships, and making an appointment with an academic advisor.

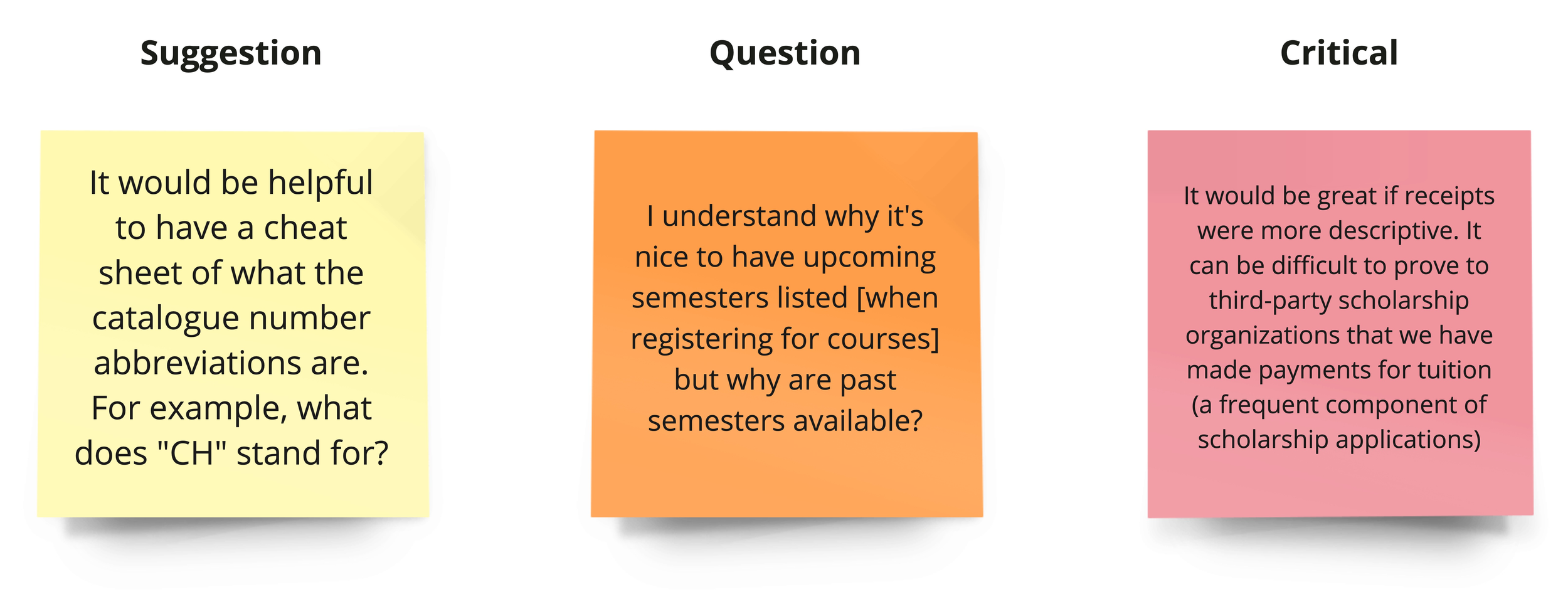

The SQUACK method asks users to critique something using six specific types of feedback: Suggestions, Questions, User Signal, Accidents, Critical, and Kudos.

A visual representing the types of feedback in the SQUACK method. This image is taken from squackfeedback.com.

We conducted 8 feedback sessions with over 40 students, averaging 5 students per session. All sessions were conducted remotely using Zoom and Miro. Six sessions were conducted in English. One session was conducted in Spanish and one was conducted in Korean with the assistance of live interpreters.

A screenshot of what the Miro board looked like when students arrived at the SQUACK session. This image shows the section for registering for classes. At the top of the screen, we provided color-coded sticky notes for students to use. Below that are screenshots of each step in the class registration process.

A screenshot of what the Miro board looked like after one group of students had SQUACK-ed the class registration process.

The SQUACK method allowed us to collect rich, qualitative feedback at scale. We were able to cover 3-5 tasks per session, allowing us to gather hundreds of individual pieces of feedback in a short amount of time. Examples of feedback collected from these sessions are shown below.

Generative Interviews

After conducting our survey and facilitating eight SQUACK sessions, we had good signal on gaps in Fuller’s digital ecosystem and UI/UX improvements that could be made to existing resources. However, we were lacking insights and anecdotal feedback on how students felt about their experience at Fuller holistically.

We conducted 12 one-on-one interviews with students to gather this feedback. Each interview was one hour long and was conducted remotely over Zoom. We asked students to describe specific aspects of the student experience they found particularly delightful or frustrating, and to describe their feelings about their time at Fuller overall.

Our interviews yielded rich, descriptive anecdotes about the student experience at Fuller.

“I’m taking online classes because I can’t take synchronous classes, right? That’s the whole point.

One class, [the professor] required a lot of synchronous portions of time, every Monday and Thursday for the whole quarter in the evenings Pacific time. So not only do I live in the Eastern time zone, I also travel internationally [for work]. Whenever I sign up for classes I think through, ‘Where am I going to be traveling this quarter?’ I was in Africa for 3 weeks of this 10-week quarter, and all these synchronous things took place at 1:00am, and they were required…

It felt like a bait and switch. It should have been listed as an online live class.”

—Fuller Student

“I’m not really interested in the social life aspects [of campus] because I don’t live there and…my life is so busy anyway…I’m far more motivated to put time and energy into getting to know the students and the professors and the material in those classes. It’s pretty much what I save my energy for.”

—Fuller Student

Results

After conducting all three research streams, we had enough data to make high-level recommendations for improving the online student experience. We identified four thematic areas Fuller could focus on to improve the student experience:

Realizing Global Promise: Fuller offers programs in multiple languages and enrolls students from dozens of countries. They aspire to build a global student community, but this presents logistical and cultural challenges. Finding ways to smooth these over would benefit students.

Building Academic Community: Most Fuller students are adult, online students who have busy lives and aren’t interested in extracurriculars or socializing at Fuller. However, most students want to build community and professional networks within their classes and academic programs.

Making It Easier to Get Help: Navigating a completely online experience can be confusing and overwhelming for students. Providing clearer entry points for self-guided information seeking would benefit students.

Technical Consistency and Optimization: Creating consistent user experiences across tools and platforms, while limiting technical glitches and constraints, will make the online student experience smoother and more enjoyable for students.

We created a set of recommendations relating to each of these four themes, as well as each of the major systems students use. Altogether, we created 38 recommendations for improving Fuller’s digital student experience.

As our final project activity, we presented the Fuller team with all 38 recommendations, sorted by theme, and facilitated a workshop to help them prioritize these recommendations by effort and impact.

A copy of the recommendations, color-coded by theme and system, after the Fuller team had completed their prioritization workshop.
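For readers who want to see the mechanics of an effort and impact matrix, here is a minimal Python sketch. It is an illustration only, not the tool or scoring used in the workshop: the 1-to-5 scale, the midpoint, the quadrant labels, and the placeholder recommendation names are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    name: str
    impact: int  # hypothetical scale: 1 (low) to 5 (high)
    effort: int  # hypothetical scale: 1 (low) to 5 (high)


def quadrant(rec: Recommendation, midpoint: int = 3) -> str:
    """Place a recommendation in one of four effort/impact quadrants."""
    high_impact = rec.impact >= midpoint
    low_effort = rec.effort < midpoint
    if high_impact and low_effort:
        return "quick win"
    if high_impact:
        return "major project"
    if low_effort:
        return "fill-in"
    return "deprioritize"


def prioritize(recs: list[Recommendation]) -> list[Recommendation]:
    """Sort by highest impact first, breaking ties by lowest effort."""
    return sorted(recs, key=lambda r: (-r.impact, r.effort))


# Placeholder data, not actual project recommendations or scores.
recs = [
    Recommendation("Placeholder C", impact=2, effort=1),
    Recommendation("Placeholder A", impact=5, effort=2),
    Recommendation("Placeholder D", impact=1, effort=4),
    Recommendation("Placeholder B", impact=4, effort=5),
]

for rec in prioritize(recs):
    print(f"{rec.name}: {quadrant(rec)}")
```

In a workshop setting the scores come from group discussion rather than a formula, so a sketch like this is most useful afterward, for turning the agreed placements into an ordered list.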

Project Impacts

At the end of the project, Fuller received:

Exhaustive findings from three research efforts

A set of comprehensive recommendations for improving the online student experience

A prioritized list of recommendations and the anticipated impact of each recommendation

The Fuller team was able to begin implementing several recommendations immediately after this project ended. Our team was brought back to help design the structure of a new online student resource hub in the months following this work.